Yesterday at work, I saw an article over at Ars Technica that I wanted to read. It was a news update on the substitute teacher who was convicted of showing porn to students after the spyware-infected classroom PC started showing porn pop-up images. If you aren’t already familiar with the story, there are a large number of articles on its beginning and evolution over at Boing Boing. I had already read some of the latest on the story – Ms. Amero has been granted a new trial in place of the sentencing she was supposed to receive today – but wanted to read the Ars Technica take on this simply because I respect the authors at Ars and value their views.

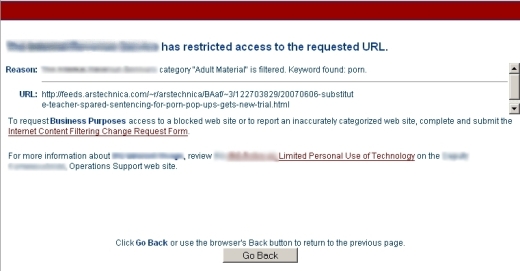

Rather than getting to read the full Ars story, however, I got a block page (click the image for a larger view).

Yes, I have been blocked from reading a story at a techie site because the word “porn” appears in the URL. This is a classic example of stupid filtering. What makes this so damn funny to me is that just a few minutes before trying to access Ars, I was reading commentary by Cory Doctorow on the ineffectiveness and dangerousness of internet filtering. I wanted to post an article about it here, but wasn’t sure what my lead-in would be. Even though I can simply post such stories as short notes for my right-column Asides section, I wanted to say something about it to highlight the problem of bad internet filtering (for values of bad meaning all). Internet filtering is an ineffective technology. More legitimate content is blocked than is reasonable. Vast regions of the interpipes are left unblocked because the blocking databases cannot keep up and the automated analyzers fail so frequently. Needed web content cannot be accessed due to poor automated analyzers (e.g., at one point, AOL filtering blocked the word “breast” from chatrooms, which caused problems for a breast-cancer survivors’ group).
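To make the failure mode concrete, here is a minimal sketch of naive keyword matching. It is only an illustration: the keyword list, the function name `is_blocked`, and the sample URLs are all made up, and I am not claiming this is how any particular filtering product works internally.

```python
# Hypothetical keyword list; real filtering products use their own rules,
# which are not known here.
BLOCKED_KEYWORDS = ("porn", "breast")


def is_blocked(text: str) -> bool:
    """Return True if any blocked keyword appears anywhere in the text."""
    lowered = text.lower()
    return any(keyword in lowered for keyword in BLOCKED_KEYWORDS)


# Made-up samples: a news story URL, a support-group chat line,
# and an adult site whose URL happens to contain no listed keyword.
samples = [
    "http://news.example.com/teacher-porn-popup-case-gets-new-trial.html",
    "Welcome to the breast-cancer survivors chat",
    "http://adult.example.com/gallery/12345",
]

for sample in samples:
    print(is_blocked(sample), sample)
```

In this sketch the news story and the support-group message are both blocked, while the adult-site URL passes because nothing in it matches the list. That is the same pair of failures described above: legitimate content blocked, unwanted content let through.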

But saying something intelligent and compelling is difficult, because I’m operating from a different level of understanding of this technology than most folks. I know I can handle my own children’s web access, because I have tools to monitor what they are doing. My wife and I are with or near our children when they are online. In the future, it will be harder to prevent them from accessing things that we don’t want them to see, but I can know what they are doing and deal with it when it is a problem. Most parents don’t have the technical and networking background I have, so they can’t do the same. While I try to speak about technology in a manner that non-techies can understand, I think the fallacy of internet filtering software is something I would not be able to do justice to in a non-technical manner. Since Cory has expressed some of the problems so well, however, I can take the fallback position of pawning my readers off on someone else for an explanation.

People say bad things online. They write vile lies about blameless worthies. They pen disgusting racist jeremiads, post gut-churning photos of sex acts committed against children, and more sexist and homophobic tripe than you could read – or stomach – in a lifetime. They post fraudulent offers, alarmist conspiracy theories, and dangerous web pages containing malicious, computer-hijacking code.

It’s not hard to understand why companies, government, schools and parents would want to filter this kind of thing. Most of us don’t want to see this stuff. Most of us don’t want our kids to see this stuff – indeed, most of us don’t want anyone to see this stuff.

But every filtering enterprise to date is a failure and a disaster, and it’s my belief that every filtering effort we will ever field will be no less a failure and a disaster. These systems are failures because they continue to allow the bad stuff through. They’re disasters because they block mountains of good stuff. Their proponents acknowledge both these facts, but treat them as secondary to the importance of trying to do something, or being seen to be trying to do something. Secondary to the theatrical and PR value of pretending to be solving the problem.

. . .

The same companies that supply the world’s torturers and totalitarians are also supplying our schools, workplaces, and cities. I edit a popular website, Boing Boing, that is widely censored by these firms. One firm, SmartFilter, regularly classifies us as “adult” because less than one per cent of the tens of thousands of posts we’ve made over the years feature thumbnail-sized nudes, including Michelangelo’s David (Smartfilter maintains that any page containing David’s willy is a “nudity” page). Another, Dan’s Guardian, is employed by the City of Boston for its citywide network – it is so indiscriminate that it banned Boing Boing because we linked to a page of Google search results that had the “SafeSearch” option switched off, meaning that it might contain a link to an adult site. (Dan’s Guardian also banned downloads of my 2004 novel Eastern Standard Tribe).

There is more to the article, including more examples of filtering failures that block legitimate content and commentary on why the companies producing this faulty software continue to do so. Head over and read the full story at The Guardian web site.
